Hadoop and Spark, two popular big data frameworks, can work together, but they are distinct tools suited to different business use cases. Here is a brief introduction to both platforms.

Hadoop and Spark continue to gain traction among data engineering professionals and organizations that need to manage the volume, variety, and velocity of their data. Let’s compare the two frameworks briefly.

Hadoop and Spark are open-source frameworks for preparing, processing, managing, and analyzing big data sets. Both are Apache Software Foundation projects.

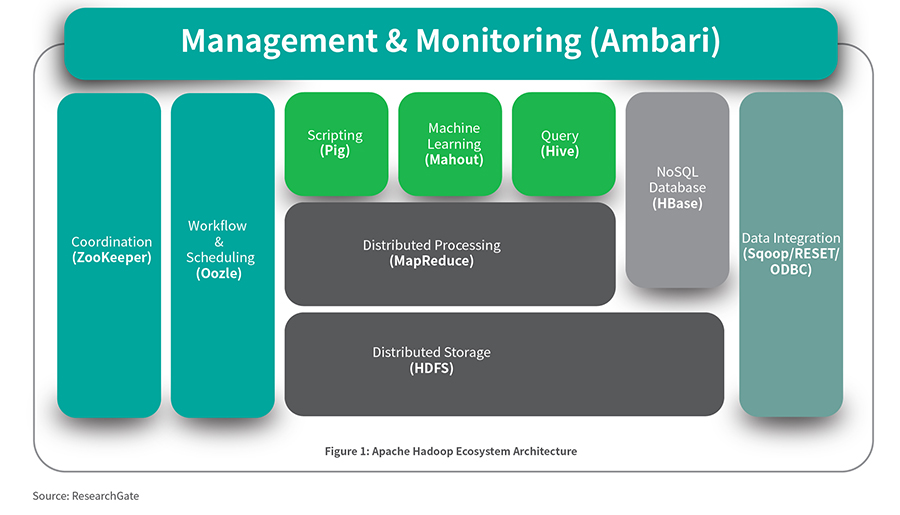

Apache Hadoop is open-source software for managing large datasets, from gigabytes to petabytes. It can store and process structured, semi-structured, and unstructured data. It is highly scalable and runs on anything from a single server to thousands of machines. It helps protect data and supports analytics and decision-making processes.
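To make “store and process” more concrete: Hadoop’s classic processing model, MapReduce, runs a map function over each split of the input, groups the intermediate key-value pairs by key (the shuffle), and runs a reduce function over each group. The minimal, single-process Python sketch below imitates the well-known word-count job. It is illustrative only; it does not use Hadoop’s Java API, and all function names are hypothetical.

```python
# Illustrative sketch only: hypothetical names, not Hadoop's MapReduce API.
from collections import defaultdict
from typing import Iterable, Iterator


def map_phase(split: str) -> Iterator[tuple[str, int]]:
    """Map: emit a (word, 1) pair for every word in one input split."""
    for word in split.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[tuple[str, int]]) -> dict[str, list[int]]:
    """Shuffle: group the intermediate values by key."""
    grouped: dict[str, list[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped: dict[str, list[int]]) -> dict[str, int]:
    """Reduce: combine all values collected for each key."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(splits: list[str]) -> dict[str, int]:
    # In a cluster, each split would be mapped in parallel on a different node;
    # this sketch simply processes the splits one after another.
    intermediate = (pair for split in splits for pair in map_phase(split))
    return reduce_phase(shuffle_phase(intermediate))


if __name__ == "__main__":
    print(word_count(["big data big clusters", "data on disk"]))
```

Calling `word_count(["big data big clusters", "data on disk"])` yields a count of 2 for “big” and “data” and 1 for every other word. In a real cluster, the map step ideally runs on a node that already stores the split, which is what lets the model scale out.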

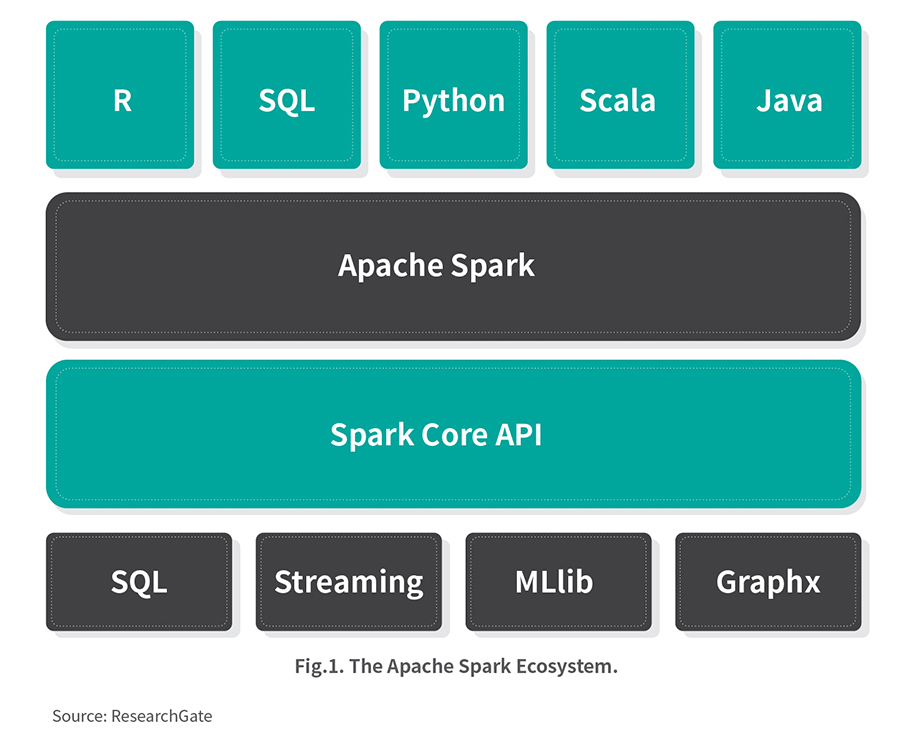

Apache Spark, also open-source software, is a data processing engine for big data sets. It caches and processes data in random access memory (RAM), which can make it much faster than Hadoop, up to 100x for some in-memory workloads. It is a unified engine supporting SQL queries, graph processing, machine learning, and data streaming. Its APIs are designed to make data transformation and semi-structured data manipulation easy.
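Much of Spark’s speed advantage comes from keeping intermediate results in memory instead of recomputing them or rereading them from disk for every action. The toy Python class below is a hypothetical model of that idea, not PySpark’s API: transformations are lazy, and `cache()` keeps the first computed result so later actions reuse it. In PySpark itself, the corresponding calls are `DataFrame.cache()` and `RDD.cache()`.

```python
# Illustrative sketch only: ToyDataset is hypothetical, not PySpark's API.
from __future__ import annotations

from typing import Callable, Optional


class ToyDataset:
    """Lazy dataset: transformations are only recorded, and data is
    computed when an action such as count() runs."""

    def __init__(self, compute: Callable[[], list[int]]) -> None:
        self._compute = compute
        self._cached: Optional[list[int]] = None
        self._keep_in_memory = False

    def map(self, fn: Callable[[int], int]) -> ToyDataset:
        # Lazy transformation: build a new dataset that depends on this one.
        return ToyDataset(lambda: [fn(x) for x in self._materialize()])

    def cache(self) -> ToyDataset:
        # Keep the first computed result in memory and reuse it afterwards.
        self._keep_in_memory = True
        return self

    def _materialize(self) -> list[int]:
        if not self._keep_in_memory:
            return self._compute()  # recompute from the source every time
        if self._cached is None:
            self._cached = self._compute()
        return self._cached

    # Actions: these trigger the actual computation.
    def count(self) -> int:
        return len(self._materialize())

    def total(self) -> int:
        return sum(self._materialize())


if __name__ == "__main__":
    scans = [0]

    def read_from_disk() -> list[int]:
        scans[0] += 1  # stand-in for an expensive scan of data on disk
        return list(range(1, 6))

    plain = ToyDataset(read_from_disk).map(lambda x: x * x)
    plain.count(), plain.total()
    print("source scans without cache:", scans[0])

    scans[0] = 0
    cached = ToyDataset(read_from_disk).map(lambda x: x * x).cache()
    cached.count(), cached.total()
    print("source scans with cache:", scans[0])
```

Without `cache()`, the two actions scan the source twice; with it, they scan it once. That reuse across actions is the effect Spark’s in-memory caching exploits in iterative and interactive workloads.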

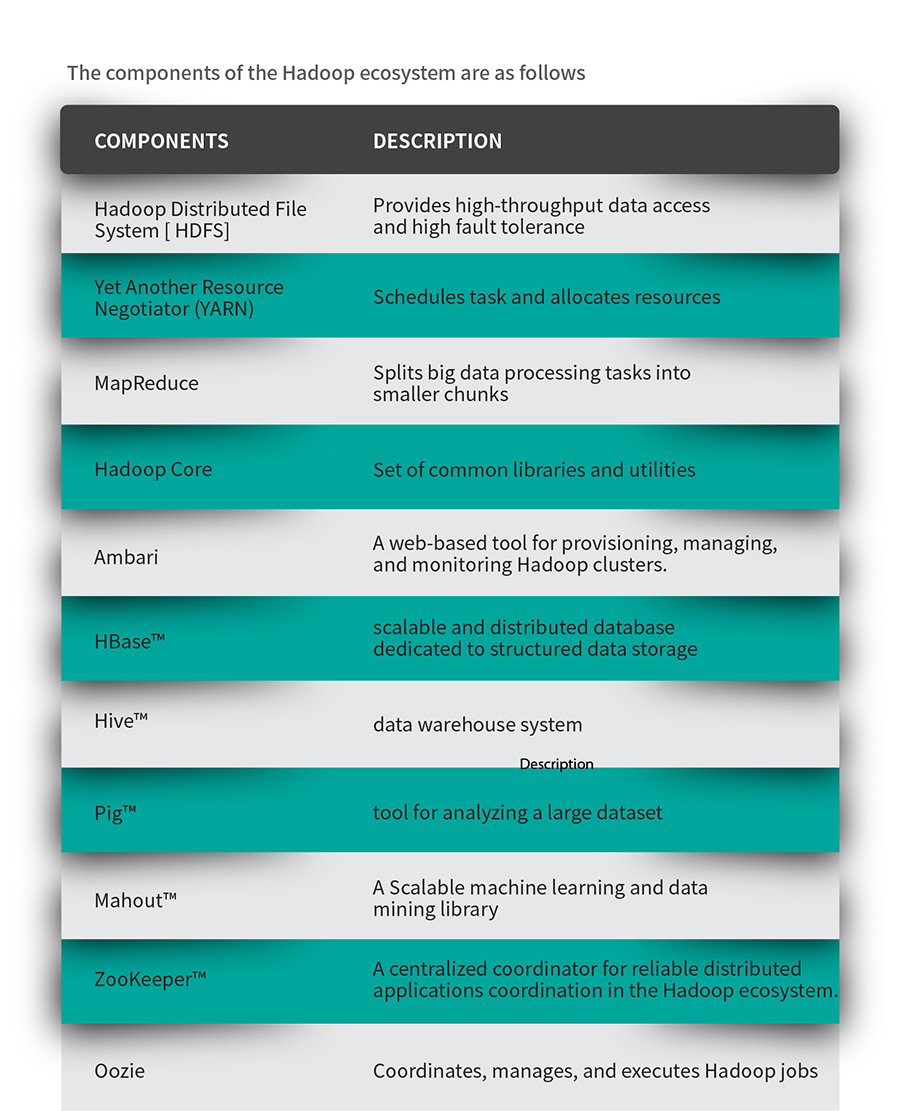

Hadoop, an efficient open-source big data platform, is characterized by high scalability and fault tolerance. It comprises four modules: Hadoop Common (shared utilities), the Hadoop Distributed File System (HDFS), YARN (resource management), and MapReduce (processing). Related projects such as HBase and ZooKeeper extend this core. In addition, distributors can add their own proprietary add-ons to the ecosystem. For instance, Cloudera offers Cloudera Manager, IBM offers Big SQL, and so forth.
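Hadoop’s fault tolerance comes largely from HDFS, which splits each file into large blocks and stores several copies of every block on different machines. The plain-Python sketch below models that idea, assuming the HDFS defaults of 128 MB blocks and three replicas. The function names are hypothetical, and the round-robin placement is a deliberate simplification of HDFS’s rack-aware placement policy.

```python
# Illustrative sketch only: hypothetical helper names; assumes HDFS defaults.
import math

BLOCK_SIZE_MB = 128  # HDFS default block size (dfs.blocksize) since Hadoop 2
REPLICATION = 3      # HDFS default replication factor (dfs.replication)


def place_blocks(file_size_mb: int, nodes: list[str],
                 block_size_mb: int = BLOCK_SIZE_MB,
                 replication: int = REPLICATION) -> list[list[str]]:
    """Split a file into fixed-size blocks and put each block on `replication`
    distinct nodes. Round-robin placement simplifies HDFS's rack-aware policy."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    num_blocks = math.ceil(file_size_mb / block_size_mb)
    return [
        # Shift the starting node per block so storage load spreads evenly.
        [nodes[(block + r) % len(nodes)] for r in range(replication)]
        for block in range(num_blocks)
    ]


def survives(placement: list[list[str]], failed: set[str]) -> bool:
    """The file stays readable if every block keeps at least one live replica."""
    return all(any(node not in failed for node in replicas) for replicas in placement)


if __name__ == "__main__":
    layout = place_blocks(300, ["n1", "n2", "n3", "n4"])
    print(layout)
    print("readable after losing n1, n2:", survives(layout, {"n1", "n2"}))
```

With four nodes and three replicas, a 300 MB file becomes three blocks, and it stays readable if any two nodes fail; losing three particular nodes can make a block unavailable.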

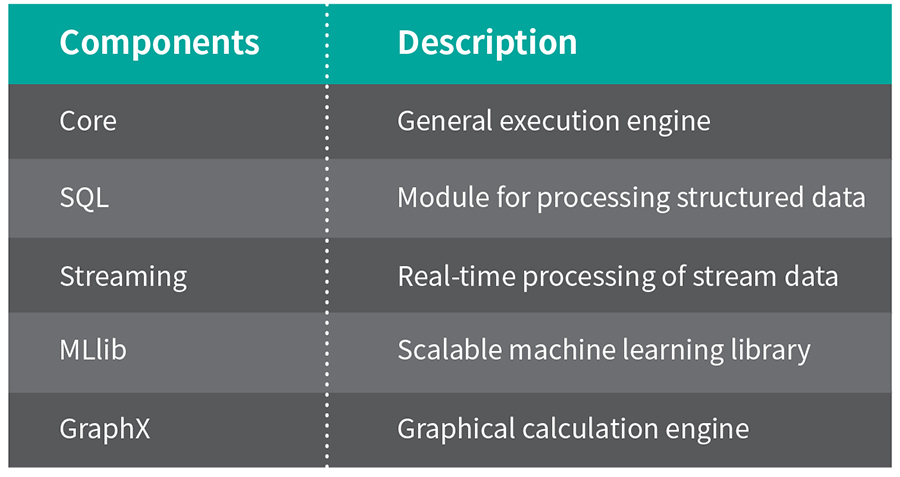

The components of the Spark ecosystem are as follows:

Spark is a next-generation data processing engine with rich APIs for Python, R, Scala, Java, and SQL. It supports compute-intensive tasks, machine learning, streaming, interactive queries, and graph processing. It processes streams with micro-batching [the technique that divides the incoming data into small batches and processes them one at a time]. Thanks to near-real-time processing and in-memory storage, it often performs better than other big data technologies.
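The micro-batching idea can be sketched in a few lines of Python: cut the incoming stream into small batches, then hand each batch to an ordinary batch function. This is an illustration with hypothetical function names, not Spark’s streaming API, and it cuts batches by record count for simplicity, whereas Spark forms micro-batches by time interval.

```python
# Illustrative sketch only: count-based batches (Spark cuts micro-batches by time).
from itertools import islice
from typing import Callable, Iterable, Iterator, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def micro_batches(stream: Iterable[T], batch_size: int) -> Iterator[list[T]]:
    """Cut a (possibly unbounded) stream into small consecutive batches."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    it = iter(stream)
    while batch := list(islice(it, batch_size)):
        yield batch


def run_stream(stream: Iterable[T], batch_size: int,
               process: Callable[[list[T]], R]) -> list[R]:
    # Each small batch is handled by an ordinary batch function, in order.
    return [process(batch) for batch in micro_batches(stream, batch_size)]


if __name__ == "__main__":
    ride_fares = [5, 1, 4, 2, 8]  # hypothetical incoming events
    print(run_stream(ride_fares, 2, sum))
```

`run_stream` collects its results into a list, so it only suits finite test streams; for a truly unbounded source you would iterate over `micro_batches` directly and act on each batch as it arrives.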

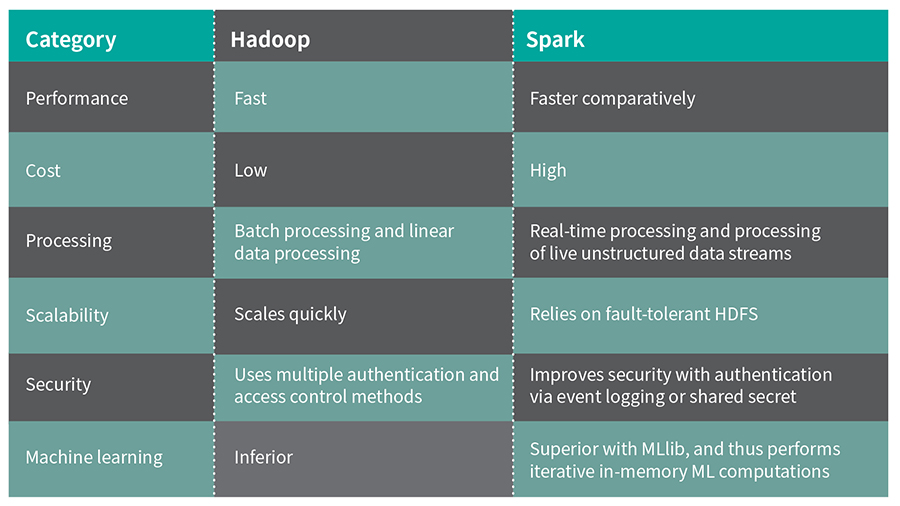

Take a closer look at the key differences between the two:

The Hadoop market is expected to reach USD 340.35 billion by 2027, growing at a CAGR of 37.5 percent from 2020 to 2027, according to Allied Market Research. Key market players include MapR Technologies, Amazon Web Services Inc., Cisco Systems Inc., Dell EMC, Google LLC, Teradata Corporation, and IBM Corporation.

The Apache Spark market is expected to grow at a CAGR of 33.9 percent during the forecast period 2018-2025, according to Esticast Research and Consulting. The United Kingdom held the highest share [30 percent] of the European market. Asia-Pacific is expected to grow at the highest CAGR, 36.4 percent, and the data tier above 10 PB may grow at the highest CAGR among data tiers, 36.0 percent, during the forecast period. Key market players include Databricks, Qubole Inc., MapR Technologies Inc., Cloudera Inc., and IBM Corporation.
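Both forecasts are stated as compound annual growth rates (CAGR), where a value grows as `end = start * (1 + r) ** n` over `n` years. The short Python sketch below applies that formula to the figures quoted above, assuming one compounding period per year (seven periods for both 2020-2027 and 2018-2025). Its outputs are arithmetic consequences of the quoted numbers, not figures published by either research firm.

```python
# Arithmetic on the quoted forecasts; assumes one compounding period per year.
def project(start: float, cagr: float, years: int) -> float:
    """Compound a starting value forward: start * (1 + cagr) ** years."""
    return start * (1 + cagr) ** years


def implied_start(end: float, cagr: float, years: int) -> float:
    """Invert the compounding to recover the starting value a forecast implies."""
    return end / (1 + cagr) ** years


# Hadoop: USD 340.35 billion in 2027 at a 37.5% CAGR from 2020 (7 periods).
hadoop_2020 = implied_start(340.35, 0.375, 7)
# Spark: a 33.9% CAGR over 2018-2025 (7 periods) multiplies the market by this factor.
spark_multiple = project(1.0, 0.339, 7)

print(f"Implied 2020 Hadoop market: USD {hadoop_2020:.1f} billion")
print(f"Spark market growth multiple, 2018-2025: {spark_multiple:.1f}x")
```

Under those assumptions, the Hadoop forecast implies a 2020 market of roughly USD 36.6 billion, and a 33.9 percent CAGR multiplies the Spark market by about 7.7 over seven years.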

Given these characteristics, the two frameworks are typically used as follows:

Hadoop-based applications are widely used by organizations that need analytics on email, video, and machine-generated data. Hadoop is highly cost-effective and time-efficient, enabling technical experts to perform several operations, including big data analytics, big data management, and big data storage in the cloud.

Hadoop is effective for:

Spark is quite advanced in its features and functionality, making it a popular choice among developers and end users. Spark is also faster to adopt and deploy, and it integrates seamlessly with HDFS, Hive, HBase, and Cassandra. IoT devices are expected to drive the Spark market in the near future.

Spark is effective for:

With this basic understanding, let’s see how to choose between the two.

Choosing the platform depends on your business needs. Hadoop is better when your focus is on security, architecture, and cost-effectiveness. Spark is recommended for business cases where performance, data compatibility, and ease of use are the focus.

Both are meant for handling data, yet they are entirely different frameworks. They were not created to compete with one another but to complement each other. Although one may be the logical choice for a given use case, Hadoop and Spark work best together.

In combination, Spark takes advantage of Hadoop’s file system [Hadoop stores huge amounts of data on affordable hardware], while Spark handles real-time processing of incoming data. Spark has no distributed storage of its own, so without Hadoop it may miss historical data that it cannot hold. In addition, Spark leverages Hadoop’s security and resource management: YARN makes Spark clusters and their resources easier to manage. Together, the two environments benefit retroactive analysis of transactional data, IoT data processing, and advanced analytics.
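One common way to organize this split between stored history and real-time processing is the lambda architecture: a batch layer periodically recomputes results over all historical data, a speed layer keeps incremental results for newly arrived events, and queries merge the two. The sketch below is a hypothetical, in-memory Python illustration of that pattern using trip counts per city. It uses no Hadoop or Spark APIs, and it is not a description of any particular company’s pipeline.

```python
# Illustrative lambda-architecture sketch with hypothetical names; no Hadoop/Spark APIs.
from collections import Counter
from typing import Iterable


def batch_view(historical_trips: Iterable[str]) -> Counter:
    """Batch layer: recompute trip counts per city over the full history."""
    return Counter(historical_trips)


def update_speed_view(speed_view: Counter, new_trips: Iterable[str]) -> Counter:
    """Speed layer: add trips that arrived after the last batch run."""
    speed_view.update(new_trips)
    return speed_view


def query(batch: Counter, speed: Counter, city: str) -> int:
    # Serving layer: merge the historical total with recent increments.
    return batch[city] + speed[city]


if __name__ == "__main__":
    history = batch_view(["SF", "NYC", "SF"])  # hypothetical archived trips
    recent = update_speed_view(Counter(), ["SF", "LA"])  # hypothetical live trips
    print(query(history, recent, "SF"))
```

In a real deployment, the batch view would typically be rebuilt from data stored in HDFS and the speed view reset after each rebuild; here both are plain in-memory counters.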

Let’s look at an example of how well Spark and Hadoop blend together:

Actions taken by customers:

Customers press a button to request an Uber ride, take the ride, and press another button to pay the driver.

Actions taken by Uber systems:

There is a lot more going on behind these simple customer actions. According to Uber’s data team, much of the infrastructure runs on Hadoop and Spark.

The system includes a Spark-based component called StreamIO that decouples raw data ingestion from the relational data warehouse. Instead of aggregating trip data from various distributed data centers, Uber uses Kafka to stream change-data logs from the local data centers and loads them into a centralized Hadoop cluster. The system then uses Spark SQL to convert the schema-less JSON data into structured Parquet files.
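Converting schema-less JSON into Parquet involves two steps that Spark SQL performs for you: inferring a schema from the records and laying the data out column by column. The plain-Python sketch below imitates both steps on a few made-up trip records. The field names and the type-widening rule are hypothetical simplifications, and the code writes no real Parquet files. In PySpark, the equivalent pipeline would typically be `spark.read.json(...)` followed by `DataFrame.write.parquet(...)`.

```python
# Illustrative sketch of schema inference + columnar layout; not Spark SQL, writes no Parquet.
import json
from typing import Any


def infer_schema(records: list[dict[str, Any]]) -> dict[str, str]:
    """Collect every field seen in any record and the type name of its values."""
    seen: dict[str, set[str]] = {}
    for record in records:
        for field, value in record.items():
            kinds = seen.setdefault(field, set())
            if value is not None:  # nulls say nothing about a field's type
                kinds.add(type(value).__name__)
    schema: dict[str, str] = {}
    for field in sorted(seen):
        kinds = seen[field]
        if not kinds:
            schema[field] = "null"
        elif len(kinds) == 1:
            schema[field] = next(iter(kinds))
        else:
            schema[field] = "string"  # simplified rule: widen conflicting types
    return schema


def to_columns(records: list[dict[str, Any]], schema: dict[str, str]) -> dict[str, list[Any]]:
    """Pivot row-oriented records into one list per column; gaps become None."""
    return {field: [record.get(field) for record in records] for field in schema}


raw_lines = [  # hypothetical schema-less trip events, one JSON object per line
    '{"trip_id": 1, "city": "SF", "fare": 12.5}',
    '{"trip_id": 2, "city": "NYC"}',
    '{"trip_id": 3, "city": "SF", "fare": 30.0, "surge": true}',
]
rows = [json.loads(line) for line in raw_lines]
schema = infer_schema(rows)
columns = to_columns(rows, schema)
print(schema)
print(columns)
```

Filling missing fields with `None` mirrors how a columnar format records nulls, and storing each column contiguously is what lets Parquet readers scan only the columns a query needs.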

Currently, Uber is investing in Spark, including the MLlib and GraphX libraries for machine learning and graph analytics, to process millions of events and requests per day.

Hadoop and Spark play significant roles in big data applications. Spark requires a somewhat larger maintenance budget but needs less hardware, and it is the go-to platform when speed matters. However, some use cases still need Hadoop. Each brings its own strengths to the table.

In several situations both tools should be used together, as the Uber case shows. Organizations that need both batch analysis and stream analysis for their services can realize the benefits of both tools.

Organizations are advised to optimize their big data management initiatives by leveraging the unique benefits of Hadoop and Spark. For professionals, learning both Hadoop and Spark is a strong way to advance a career in big data engineering.